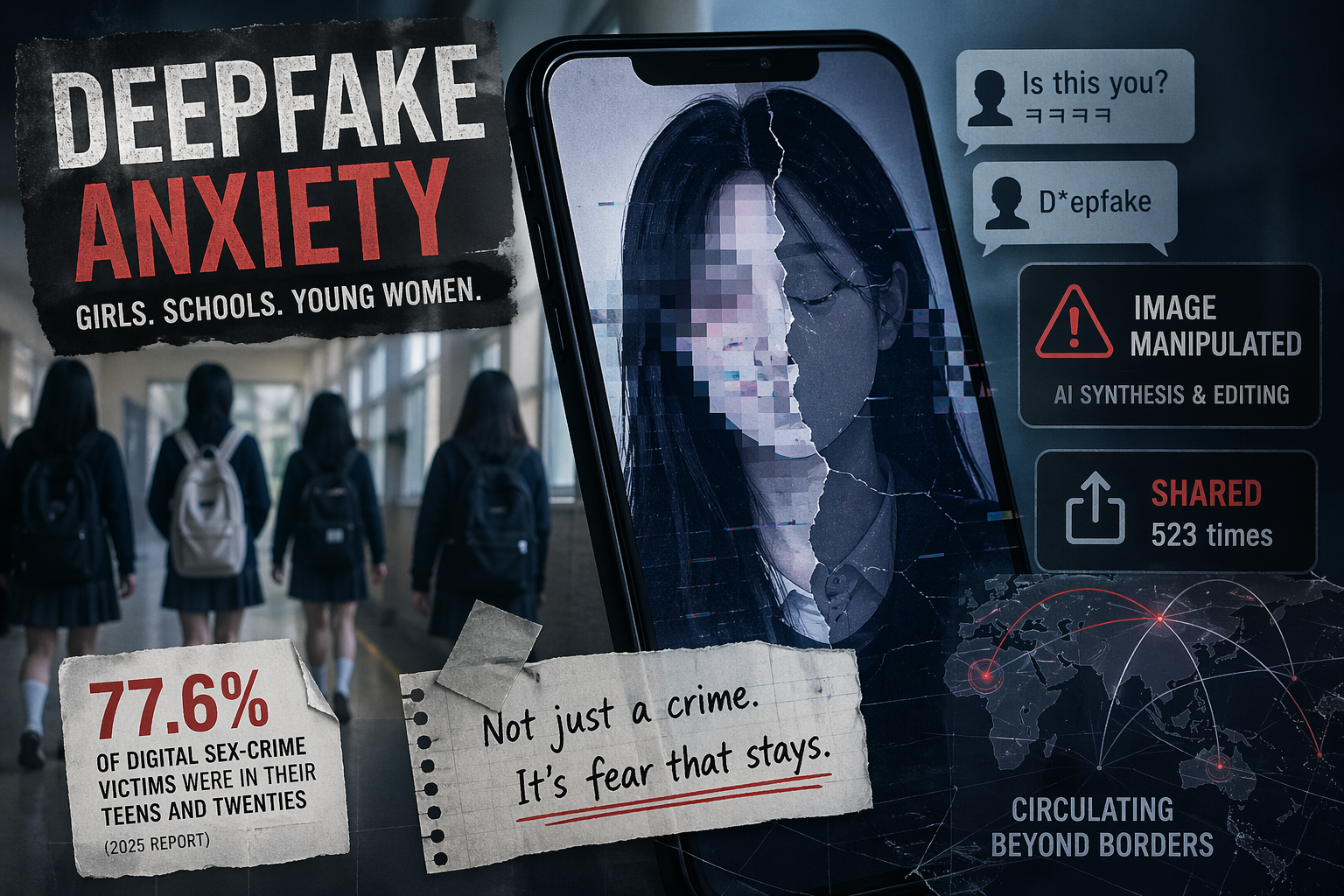

New government-linked reporting showed that 77.6 percent of digital sex-crime victims supported last year were in their teens and twenties, while deepfake-style “synthesis and editing” cases remained heavily concentrated among the young.

South Korea’s deepfake anxiety has stayed intense because the issue is no longer read as a narrow cybercrime story. In mid-April, government-linked reporting showed that the Central Digital Sex Crime Victim Support Center assisted 10,637 victims last year, and that 77.6 percent of them were in their teens and twenties. The figures kept the issue squarely in public view not only because of the scale, but because they reinforced a wider social fear: young people remain the most exposed in an image environment where photos can be copied, altered, and redistributed with little warning.

The strongest sign of that fear may be what victims said they were experiencing. According to the Seoul Economic Daily’s English report on the government data, “fear of distribution” was the most common type of harm, ahead of illegal filming, direct distribution, and distribution threats. At the same time, new victim cases fell while ongoing support cases rose, suggesting that digital sex crimes continue to linger through repeated circulation and follow-up damage even after an initial incident. That pattern helps explain why public anxiety has remained elevated: the threat is not only the making of abusive material, but the sense that it can keep coming back.

Deepfake-style abuse has become one of the clearest symbols of that broader unease. Yonhap reported that “synthesis and editing” cases, the category most closely associated with AI-assisted sexual manipulation, were especially concentrated among younger victims. Seoul Economic Daily’s English edition reported that such cases rose 16.8 percent year over year, and that 91.2 percent of victims in that category were in their teens and twenties. Female victims also vastly outnumbered male victims in those cases. Against that backdrop, Korea JoongAng Daily framed the story less as a general digital-risk issue than as a growing online threat aimed at young women.

That is also why schools remain central to the conversation. Kyunghyang Shinmun reported that 37 of 180 digital sexual-violence cases recorded by the Seoul Metropolitan Office of Education from 2023 to 2025, roughly one in five, were handled at the principal level. It highlighted one case involving a sexual composite image that was treated as resolved because there was no proof of further distribution and the image had already been deleted. The paper also noted that teen girls’ share of supported victims has risen sharply over time, strengthening the perception that school-age girls are bearing a disproportionate burden. In this framing, the public concern is not just that deepfakes exist, but that institutions may still be responding too weakly or too late when the victims are young.

The result is that deepfake panic in Korea now sits at the intersection of gender, youth, and institutional trust. Girls and women in their teens and twenties are not simply appearing in the numbers; they are shaping how the public understands the issue. The question many readers are left with is no longer whether deepfake abuse is real or widespread. It is whether schools, investigators, and support systems can respond quickly enough in a media environment where deletion is difficult, repeat circulation is common, and the fear of exposure has become part of ordinary life for many young women.
